Micron: Future cars will require 300GB of memory

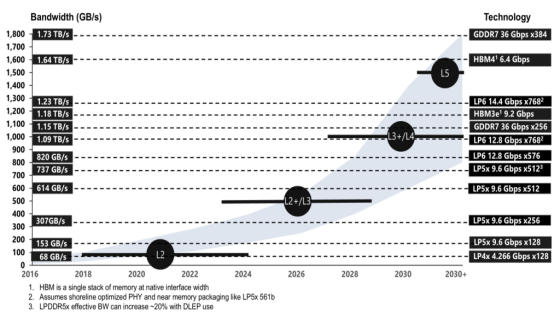

Sanjay Mehrotra, CEO of Micron, stated that as car companies launch models with Level 4 autonomous driving capabilities, cars will ultimately require more than 300GB of RAM. According to The Register, Mehrotra made this statement after Micron released its quarterly financial report. The report showed that Micron's revenue in the second quarter of this year reached $23.86 billion, nearly triple (an increase of roughly 200%) the $8.03 billion recorded in the second quarter of fiscal year 2025. This growth was still driven primarily by strong demand for high-end HBM chips from AI hyperscalers, and it also benefited from "structural supply constraints, as well as Micron's strong execution across all business levels."

As the company reaps substantial profits from the AI infrastructure build-out, it also plans to expand production capacity by building multiple wafer fabs in Japan, Singapore, and New York. These projects are expected to begin production between 2028 and 2029. Mehrotra said the company aims to increase its production capacity by 20% by 2026, which is expected to relieve some supply-side pressure. However, he predicts that even after these new fabs come online, a new market will emerge that demands large amounts of high-speed memory: autonomous vehicles.

Driving automation is divided into six levels, L0 through L5. L0 refers to vehicles with no driving automation at all; vehicles equipped with only a single automation system (such as cruise control) belong to L1; and those equipped with advanced driver assistance systems (ADAS) that can control steering and acceleration/deceleration simultaneously, such as Tesla Autopilot and Cadillac Super Cruise, are classified as L2. Vehicles with L4 capabilities, by contrast, need essentially no human intervention for tasks such as overtaking or judging when to pass through a busy intersection. However, such vehicles still retain the option for the driver to take over and drive manually.

1. The new automotive architecture is disrupting the choice of processors and memory

The surge in data from assisted and autonomous driving sensors, coupled with the need to make real-time decisions based on this data, is placing unprecedented demands on in-vehicle memory and storage subsystems.

As more and more mechanical functions are replaced by electronic systems and the level of vehicle intelligence continues to improve, some challenges faced by the automotive industry are echoing those in large data centers. In order to prioritize critical functions such as automatic braking, lane centering, reversing camera image processing, and suspension adjustment, data transmission within and between processing units and memories must achieve extremely high speeds.

At the same time, these vehicles incorporate a range of functions with very different levels of criticality. For instance, certain features within the infotainment system are crucial for alerting the driver, while others are not. The challenge lies in managing the entire vehicle as a single system while also viewing it as a "system within a system" in which certain functions have higher priority than others. The best way to address this is to increase bandwidth, reduce latency, and define more precisely which components need to be deployed where, using which manufacturing processes, and at what cost.
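The idea that some functions outrank others can be illustrated with a strict-priority queue. This is only a minimal sketch: the priority classes, the message names, and the `PriorityDispatcher` class are hypothetical and do not describe any OEM's actual scheduler.

```python
import heapq
from itertools import count

# Hypothetical priority classes (lower number = more urgent); not from the article.
PRIORITY = {"safety": 0, "driver_alert": 1, "comfort": 2, "media": 3}


class PriorityDispatcher:
    """Strict-priority queue: the most urgent pending message always goes first."""

    def __init__(self):
        self._heap = []
        self._order = count()  # tie-breaker keeps FIFO order within one class

    def submit(self, kind, payload):
        heapq.heappush(self._heap, (PRIORITY[kind], next(self._order), payload))

    def next_message(self):
        """Return the most urgent payload, or None if nothing is pending."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]

    def drain(self):
        """Return all pending payloads in dispatch order."""
        out = []
        while self._heap:
            out.append(self.next_message())
        return out


dispatcher = PriorityDispatcher()
dispatcher.submit("media", "next song metadata")
dispatcher.submit("driver_alert", "lane departure chime")
dispatcher.submit("safety", "automatic braking request")
dispatcher.submit("comfort", "cabin temperature update")
print(dispatcher.drain())
```

In this sketch the braking request is dispatched first even though it was submitted third, which is the behavior the "system within a system" view calls for.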

David Fritz, Vice President of Hybrid Physical and Virtual Systems in the Automotive and Military Aerospace Sector at Siemens EDA, said, "When we talk about things like 10Gb on-board Ethernet with guaranteed quality of service, traditional automotive engineers will say, 'How can I guarantee that this signal can really reach the braking system within 100 milliseconds?' My answer is, 'Do you see that building two blocks away? If you take a twisted-pair Ethernet cable, run it around the building, and then pull it all the way back here, there may be only a few microseconds of delay, while you are worried about milliseconds.' This is because the transmission rate is too high. Even if some arbitration occurs in the middle, you have enough time to resolve it. So, when the system is busy, the concern about whether my data can still get from point A to point B fast enough basically no longer exists. And the concern about how to divide the 1.5Mb/s CAN bus to ensure that data can arrive on time also disappears. This is the difference between megabits and gigabits."
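Fritz's microseconds-versus-milliseconds point can be checked with a rough calculation. The sketch below rests on stated assumptions that are not from the article: a 500 m cable run, a signal velocity of about 65% of the speed of light in twisted pair, and a 1,500-byte payload with protocol overhead ignored. The 10 Gb/s and 1.5 Mb/s link rates are the ones quoted above.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458
VELOCITY_FACTOR = 0.65  # assumed signal speed in twisted pair, relative to c


def propagation_delay_s(cable_m, velocity_factor=VELOCITY_FACTOR):
    """Time for a signal to travel the length of the cable."""
    return cable_m / (SPEED_OF_LIGHT_M_PER_S * velocity_factor)


def serialization_delay_s(payload_bytes, link_bits_per_s):
    """Time to clock the payload onto the wire, ignoring protocol overhead."""
    return payload_bytes * 8 / link_bits_per_s


cable_m = 500          # assumed: out around a building and back
payload_bytes = 1500   # assumed payload size

print(f"propagation over {cable_m} m: {propagation_delay_s(cable_m) * 1e6:.2f} us")
print(f"1500 B at 10 Gb/s:  {serialization_delay_s(payload_bytes, 10e9) * 1e6:.2f} us")
print(f"1500 B at 1.5 Mb/s: {serialization_delay_s(payload_bytes, 1.5e6) * 1e3:.2f} ms")
```

Under these assumptions the cable adds about 2.6 microseconds and the 10 Gb/s link about 1.2 microseconds, while moving the same payload at 1.5 Mb/s takes about 8 milliseconds.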

This can have a significant impact on the overall vehicle design. Fritz said, "If you want to send a high-priority data packet from point A to point B in the network, and the network is very busy because it is transmitting a video frame (nowadays, some OEMs have installed 16 or 20 cameras outside the vehicle), you will want to process this data as close to the edge of the vehicle as possible. This can reduce bandwidth requirements."

"Chinese OEMs understand that if they send data from 20 high-resolution cameras at once, even in the event of a collision, the system can still process the data, store frame images, and analyze them through AI. They can quickly feed the data to AI algorithms because they now have a large number of SoCs, with latency in the nanosecond or even picosecond range; whereas their competitors only have a few ECUs, and if they are lucky, these independent ECUs only have a few megabit communication channels between them. Ultimately, it's about designing a car like designing a SoC," Fritz added.
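To see why 20 cameras strain the in-vehicle network, consider a back-of-the-envelope estimate of raw, uncompressed video bandwidth. The resolution, bit depth, and frame rate below are illustrative assumptions, not figures from the article; only the camera count and the 10 Gb/s link rate come from the text.

```python
import math


def raw_camera_rate_bits_per_s(width, height, bits_per_pixel, fps):
    """Uncompressed video data rate for one camera."""
    return width * height * bits_per_pixel * fps


CAMERAS = 20                    # upper figure quoted by Fritz
LINK_BITS_PER_S = 10e9          # 10 Gb/s Ethernet, as mentioned in the text

# Assumed sensor parameters: roughly 8 MP, 12-bit raw, 30 frames per second.
per_camera = raw_camera_rate_bits_per_s(3840, 2160, 12, 30)
total = per_camera * CAMERAS
links_needed = math.ceil(total / LINK_BITS_PER_S)

print(f"per camera: {per_camera / 1e9:.2f} Gb/s")
print(f"all {CAMERAS} cameras: {total / 1e9:.2f} Gb/s")
print(f"10 Gb/s links needed for raw video: {links_needed}")
```

With these assumed parameters each camera produces about 3 Gb/s and the full set about 60 Gb/s, which is why processing the data close to the sensor, and forwarding only results or compressed streams, reduces the bandwidth the central network has to carry.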

This also enables automakers to utilize multiple processing units and memories, focusing on where the highest performance is truly needed, while considering where specifications can be downgraded, how much energy consumption is required for different functions, and what the overall cost will be.

Amir Kia, Senior Product Manager at Imagination Technologies, said, "Traditionally, these functions relied on MPUs or DSPs, but now there is increasing interest in utilizing GPUs for some of these tasks. For instance, in the context of cockpit infotainment and in-vehicle displays, many companies are already using GPUs. Developers have realized that the flexibility of GPUs enables them to efficiently handle both computational and graphical tasks. Instead of integrating additional accelerators, they see the value in extending the capabilities of existing GPUs to manage infotainment and enhance computational performance, thereby reducing system overhead. This shift also creates opportunities to use smaller MPUs in these systems or minimize reliance on DSPs."

2. Towards software-defined vehicles

For automakers, many of these changes are foundational, and they only began to shift their focus from ECUs (electronic control units) to a software-defined approach in the past decade. The advantage is that different systems and subsystems can be designed like modules in a SoC and then integrated in any reasonable location and in any reasonable way. In turn, this makes it easier to determine where and how much bandwidth is needed, how much memory capacity is required, which type of memory suits which location, and which data has the highest priority.

Kia said, "Everyone is striving to shift to a more centralized architecture. We are currently using many distributed ECUs, and we hope to transition to a more centralized infrastructure. Some customers' platforms have a huge amount of computation, so there is a lot of real-time data coming from sensors and display systems. Some customers have six cameras, while others have eight to twelve cameras, all transmitting simultaneously. Therefore, there is a lot of high-speed data exchange within the system, and they are trying to integrate all of this into a single SoC for processing."

A software-defined vehicle is distinct from a set of functionally dedicated ECUs. While different systems must fulfill their respective functions as required, the central logic in such vehicles is also capable of making real-time decisions involving multiple systems. However, to achieve this, the correct data must be accessible so that the systems can act accordingly.

Adiel Bahrouch, Director of Business Development for Rambus Silicon IP, stated: "High-resolution sensors, AI accelerators, and safety-critical loads will all converge on shared memory and storage subsystems. Without sufficient bandwidth, these subsystems will quickly become performance bottlenecks. If the memory cannot provide data to the computing engine quickly enough, chip utilization will decrease and latency will increase, which will directly affect safety and user experience. A layered memory and storage architecture, from ultra-fast on-chip storage to high-capacity persistent storage, can ensure that each workload achieves the right balance between bandwidth, latency, capacity, and cost, ultimately enabling safe, responsive, and feature-rich vehicles."
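Bahrouch's point that starved compute engines lose utilization can be sketched with a simple roofline-style estimate, in which achievable throughput is the smaller of the chip's peak rate and what the memory can feed it. The roofline model is a standard approximation rather than something the article names, and every number below is hypothetical.

```python
def attainable_tops(peak_tops, mem_bandwidth_gb_s, ops_per_byte):
    """Roofline estimate: throughput is capped by compute or by memory feed rate."""
    # GB/s * ops/byte = Gops/s; divide by 1000 to express it in Tops/s.
    memory_bound_tops = mem_bandwidth_gb_s * ops_per_byte / 1000
    return min(peak_tops, memory_bound_tops)


def utilization(peak_tops, mem_bandwidth_gb_s, ops_per_byte):
    """Fraction of peak compute that the memory system allows the chip to use."""
    return attainable_tops(peak_tops, mem_bandwidth_gb_s, ops_per_byte) / peak_tops


# Hypothetical accelerator: 100 TOPS peak, workload doing 200 operations per byte.
for bandwidth_gb_s in (100, 250, 500):
    share = utilization(100, bandwidth_gb_s, 200)
    print(f"{bandwidth_gb_s} GB/s -> {share:.0%} of peak")
```

In this toy case the accelerator reaches only a fifth of its peak at 100 GB/s and needs 500 GB/s before memory stops being the bottleneck.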

As these architectural changes reshape the automotive industry, the choice of memory technology becomes increasingly important. Michael Basca, Vice President of Products and Systems at Micron Technology, pointed out: "When you progress from L3 to L4 and beyond, the complexity, sophistication, and efficiency of models remain the focus of OEMs. We have all seen some driverless taxis get stuck in certain traffic scenarios, so it is clear that we have not yet reached the point where models can handle various extreme edge cases. At least from the storage side, these models are likely to continue to grow larger in the coming period, while becoming more efficient is a longer-term goal."
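As a rough illustration of how model growth translates into memory demand, the sketch below converts parameter counts into weight storage at different numeric precisions. It considers weights only and treats 1 GB as 10^9 bytes; the example model size and the helper names are hypothetical, and the 300GB figure is the one Micron's CEO cited.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weights_gb(num_params, dtype):
    """Memory needed for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def max_params(memory_gb, dtype, weight_fraction=1.0):
    """Largest parameter count whose weights fit in the given share of memory."""
    return int(memory_gb * 1e9 * weight_fraction // BYTES_PER_PARAM[dtype])


# Hypothetical 7-billion-parameter model at three precisions.
for dtype in BYTES_PER_PARAM:
    print(f"7B params at {dtype}: {weights_gb(7_000_000_000, dtype):.0f} GB")

# Parameters that would fit if half of a 300GB system held weights (assumed split).
print(f"{max_params(300, 'fp16', weight_fraction=0.5):,} fp16 parameters")
```

Real systems also need memory for activations, sensor buffers, maps, and the operating system, so the usable share for weights would be a design decision rather than a fixed number.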

From a more detailed perspective, the type of memory a vehicle adopts depends on how critical response time is, the target market segment, and the vehicle's power source. In pure electric vehicles, driving range is a competitive advantage, and spending less electricity on data transfer helps extend that range. Therefore, although GDDR offers higher bandwidth, LPDDR6 may be sufficient for certain functions.

Frank Ferro, Director of Cadence Silicon Solutions Group, said, "LPDDR memory first gained popularity because it provided higher bandwidth than DDR. The initial LPDDR4 had a bandwidth of about 4Gb/s. But with LPDDR6, we have increased the memory bandwidth all the way to 14.4Gb/s."

That is the first point: you gain a lot of bandwidth, and low power consumption is equally important. Another point is that LPDDR can also provide a larger memory capacity. The capacity of LPDDR6 is not as large as that of DDR, but in automotive applications, as we enter the ADAS (advanced driver assistance system) and AI inference stages, capacity is becoming very important. LPDDR6 provides a balance between memory capacity and memory bandwidth, and it seems to meet many needs in the automotive field.
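The figures Ferro quotes can be turned into aggregate bandwidth with a one-line formula. The sketch assumes the quoted Gb/s values are per-pin data rates, which is how such figures are usually stated but is not spelled out in the quote, and it uses a hypothetical 64-bit total interface width for both generations purely for comparison.

```python
def peak_bandwidth_gb_s(data_rate_gbps_per_pin, bus_width_bits):
    """Peak bandwidth in GB/s: per-pin rate (Gb/s) times data pins, over 8 bits/byte."""
    return data_rate_gbps_per_pin * bus_width_bits / 8


BUS_WIDTH_BITS = 64  # hypothetical interface width, identical for both cases

lpddr4 = peak_bandwidth_gb_s(4.0, BUS_WIDTH_BITS)    # "about 4Gb/s" from the quote
lpddr6 = peak_bandwidth_gb_s(14.4, BUS_WIDTH_BITS)   # "14.4Gb/s" from the quote

print(f"LPDDR4-class: {lpddr4:.1f} GB/s")
print(f"LPDDR6-class: {lpddr6:.1f} GB/s")
print(f"ratio: {lpddr6 / lpddr4:.1f}x")
```

Whatever width is assumed, the ratio between the two generations stays at 3.6x, since it depends only on the per-pin rates.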

However, Level 4 and Level 5 autonomous driving introduce some new variables to this equation. Daryl Seitzer, Chief Product Manager of Synopsys' Embedded Memory IP, stated, "Increasing on-chip memory capacity and bandwidth to support advanced functionalities primarily involves trade-offs in terms of increased chip area and power consumption, which can affect thermal management and reliability. Designers must strike a balance between performance requirements and energy consumption, as well as area constraints, often resorting to low-voltage operation and architectural optimization."

Furthermore, as in-vehicle language models become increasingly complex, manufacturers find themselves requiring greater memory capacity and higher bandwidth, while also striving to strike a balance between this and cost. Ferro said, "Taking Tesla as an example, you might find four LPDDR memories. They originally thought, 'We can use less GDDR,' but now they are actually using the same amount in terms of capacity. So many customers are considering switching to LPDDR6 because they now need this capacity, as well as other benefits of LPDDR."

High-bandwidth memory (HBM), which stacks DRAM dies connected by through-silicon vias (TSVs), is not currently used in automotive applications because of TSV reliability concerns under vibration. However, as demand for high-performance memory continues to grow, in some cases at the expense of lower-cost memory options, HBM has certainly caught the attention of some companies.

Yu Yang, Chief Analyst of Automotive Semiconductors at Yole Group, stated, "The memory industry is highly concentrated, with a few leading manufacturers occupying a monopolistic position, and production capacity is shared with all other industries. Therefore, understanding the memory industry is crucial for OEMs aspiring to transform. A relatively recent example is the significant surge in DDR4 memory prices over the past few months, driven by AI demand, capacity shifts, or speculative behavior in the distribution channels."

According to Yole Group, the types and applications of memory in automotive applications currently include:

● DRAM (LPDDR4/5, GDDR6): used in ADAS domain controllers, central computing, intelligent sensors, and digital cockpit SoCs;

● NAND flash memory, in several forms:

  ○ eMMC/UFS: used for infotainment, vehicle networking, and ADAS software storage;

  ○ NVMe SSD: used for emerging L3+ autonomous driving computing, as well as EDR/DSSAD storage;

  ○ SLC NAND: used for vehicle networking, RF modules, and high-endurance logs;

● NOR flash memory: used for boot and security code in ADAS sensors, gateways, regional controllers, and MCUs;

● Other non-volatile memories (EEPROM, FRAM, nvSRAM): used for calibration data, configuration parameters, and low-density event logs.

A general rule of thumb is: DRAM is used for computation, NAND for data, and NOR for code.
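The list and rule of thumb above can be captured as a small lookup table. This is just one way to organize the information from the Yole list; the dictionary layout and the `memory_types_for` helper are illustrative, not part of any Yole tooling.

```python
# Memory types and their automotive uses, transcribed from the list above.
AUTOMOTIVE_MEMORY_USES = {
    "DRAM (LPDDR4/5, GDDR6)": [
        "ADAS domain controllers", "central computing",
        "intelligent sensors", "digital cockpit SoCs",
    ],
    "eMMC/UFS": ["infotainment", "vehicle networking", "ADAS software storage"],
    "NVMe SSD": ["L3+ autonomous driving computing", "EDR/DSSAD storage"],
    "SLC NAND": ["vehicle networking", "RF modules", "high-endurance logs"],
    "NOR flash": [
        "boot and security code in ADAS sensors, gateways, "
        "regional controllers, and MCUs",
    ],
    "Other NVM (EEPROM, FRAM, nvSRAM)": [
        "calibration data", "configuration parameters", "low-density event logs",
    ],
}

# The article's rule of thumb: what each broad memory family is for.
RULE_OF_THUMB = {"computation": "DRAM", "data": "NAND", "code": "NOR"}


def memory_types_for(keyword):
    """Return the memory types whose listed uses mention the keyword."""
    keyword = keyword.lower()
    return [
        memory
        for memory, uses in AUTOMOTIVE_MEMORY_USES.items()
        if any(keyword in use.lower() for use in uses)
    ]


print(memory_types_for("vehicle networking"))
print(RULE_OF_THUMB["code"])
```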

3. Other memory types

DRAM is becoming faster and faster, while SRAM remains the preferred memory for those seeking the highest performance. However, other types of memory are also gradually entering automotive applications.

"SRAM supports real-time computing tasks, while MRAM and RRAM provide high density, low power consumption, and persistent storage, making them ideal for OTA updates, data recording, and configuration retention," said Seitzer of Synopsys. "These memory options meet the automotive industry's demands for optimal power efficiency, performance, and reliability.".

In addition, some data can be preprocessed and stored locally in the vehicle before being sent to the cloud for tasks that are less time-sensitive, such as analyzing vehicle behavior or updating map changes within a fleet.

Amit Kumar, Director of Product Marketing and Management for the Automotive Business at Cadence Tensilica, stated, "These data will not be immediately uploaded to the cloud. Instead, they will be stored for several hours or even a day, depending on which cloud service provider (AWS, Microsoft Azure, Google Cloud) is being used. Such data streams typically accumulate within the vehicle first and then undergo structured analysis in a data warehouse."
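The store-first, upload-later pattern Kumar describes might look like the following minimal sketch. The `DeferredUploader` class, the one-hour hold period, and the record contents are all hypothetical; timestamps are plain seconds so the example stays deterministic.

```python
from collections import deque


class DeferredUploader:
    """Hold records in the vehicle and release them only after a hold period."""

    def __init__(self, hold_seconds):
        self.hold_seconds = hold_seconds
        self._pending = deque()  # (timestamp, record) pairs, oldest first

    def record(self, timestamp, data):
        """Store a record locally; timestamps must be non-decreasing."""
        self._pending.append((timestamp, data))

    def due_batch(self, now):
        """Remove and return every record that has been held long enough."""
        batch = []
        while self._pending and now - self._pending[0][0] >= self.hold_seconds:
            batch.append(self._pending.popleft()[1])
        return batch


uploader = DeferredUploader(hold_seconds=3600)  # assumed one-hour hold
uploader.record(0, "map change near exit 12")
uploader.record(1800, "hard-braking event summary")

print(uploader.due_batch(now=3000))  # nothing is old enough yet
print(uploader.due_batch(now=3600))  # first record is released
print(uploader.due_batch(now=6000))  # second record is released
```

A production system would presumably persist the queue in non-volatile storage so that pending records survive a power cycle.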

Flash memory is particularly useful for this purpose. Seitzer said, "Flash memory (non-volatile, long-term) is still widely used in ECUs and central controllers. Non-volatile storage can retain data throughout the vehicle's lifecycle, providing persistent storage for firmware, logs, and security assets. When accessing off-chip data, interfaces such as eMMC, UFS, and PCIe for high-bandwidth applications are utilized. Security is ensured through encryption, authentication, and compliance with automotive security standards."

Every OEM decides on its own memory and storage architecture based on various options and target markets.

Carrie Browen, Product Manager of the Keysight EDA Software-Defined Automotive Business Line, stated: "Video recordings from external cameras, as well as internal cameras when enabled, can be used for 'fleet learning' to improve driver assistance and fully autonomous driving capabilities. These are typically short video clips related to safety incidents, such as collisions or airbag deployments. For example, Tesla describes different data categories, such as 'Autopilot Analytics & Improvements' and 'Road Segment Data Analytics', which are used to train and optimize driving assistance and navigation functions. Some data, such as dashcam footage and Sentry Mode storage (used to monitor whether there are threats around a parked vehicle), is processed locally in the vehicle unless you explicitly enable sharing. In practice, some data is stored on the vehicle, while some is stored in data centers controlled by Tesla and its partner facilities for AI training, services, and support operations."

Today, high-speed DRAM is commonly used as near-computing memory, while flash memory and other non-volatile storage options provide data backup and redundancy. However, these boundaries are starting to blur.

Browen stated, "Future architectures will achieve greater flexibility by utilizing more hybrid memory hierarchies, integrating traditional DRAM and flash memory within a single module or package. For camera and sensor data used for AI improvement, annotation and review tools allow authorized employees and contractors to view videos and images to annotate objects and driving scenes. Past media reports on annotation operations have described such interfaces, but did not disclose their exact technical architecture."

4. Conclusion

Cars are becoming complex "systems within systems", integrating an increasing number of memory and processing units, as well as more advanced data transmission and storage methods.

Randy White, Program Manager of Keysight EDA Memory Solutions, stated, "In-vehicle computing demands, including infotainment and ADAS, are increasingly requiring higher memory bandwidth and larger capacity. Compared to cloud-based processing, the low latency brought by in-vehicle local inference ensures real-time processing and critical task timing."

These are merely stepping stones on the path to fully autonomous driving. Given the trajectory of this technology's development, that day may not be too far away.